I have been running an HP ProLiant MicroServer N36L for nearly a year now. It is a great machine for the money, and at around £120 (after the cashback) it was an absolute bargain at the time! The box has been upgraded to 8GB of DDR3 RAM (the maximum the motherboard can take) and it runs ESXi 4.1 U1 absolutely fine. Disk-space wise, there is only a 30GB Vertex SSD for a few VMs (and their .vswp files); the rest of the storage is provided by a QNAP TS-509 NAS (over NFS and iSCSI).

This setup has worked flawlessly so far, but there is simply not enough RAM and CPU power for my needs (or rather, my VMs' needs). CPU Ready goes through the roof quite often because of the AMD Athlon II Neo 1.30GHz processor, which performs only slightly better than the Intel Atom range. 8GB of RAM is tight, and ESXi was paging the VMs out to their .vswp files like mad, which is why I put those files on the SSD, although that helped only to a certain extent. In the end I had had enough and decided to build a custom server that would address all of the issues above.

Here is what I came up with:

Processor: Intel Xeon X3450 2.66GHz with HT/VT-x and VT-d

Processor Cooling: Corsair CWCH100 Hydro Series H100 Cooler

Processor Cooling Fans: 2 x Noctua NF-P12

Motherboard: Supermicro X8SIL-F-O Server Board

RAM: Kingston 4 x 8GB [KVR1333D3Q8R9S/8G]

Case: Lian-Li PC-V350B

Case Noise Dampening: AcoustiPack LITE (APL) Multi-Layered Soundproof Material

Case Backplate: Custom backplate to accommodate moving the PSU to the right and adding a 120mm exhaust fan

5.25″ Drive Bay Cooling: Evercool Armour ATX HDD Cool Box HD-AR

5.25″ Drive Bay Cooling Fan: Noctua NF-R8-1800

Case Exhaust Fan [Back]: 1 x Noctua NF-P12

Case Exhaust Fan Guard: 120mm Standard Wire Case Fan Guard Grill [Black]

Power Supply: Be Quiet! BN180 L8 430W Modular PSU

Storage 1: Samsung 830 256GB SSD (main datastore)

Storage 2: Seagate Barracuda 2TB [ST2000DM001] (second datastore)

Storage 3: Vertex 1 30GB SSD (.vswp datastore)

Storage 4: Patriot Extreme Performance Xporter XT Rage 8GB (local storage for ESXi)

Storage Adapter Bracket: SilverStone SST-FP55B (allows 1 x 5.25″ and 2 x 2.5″ in one 5.25″ slot!)

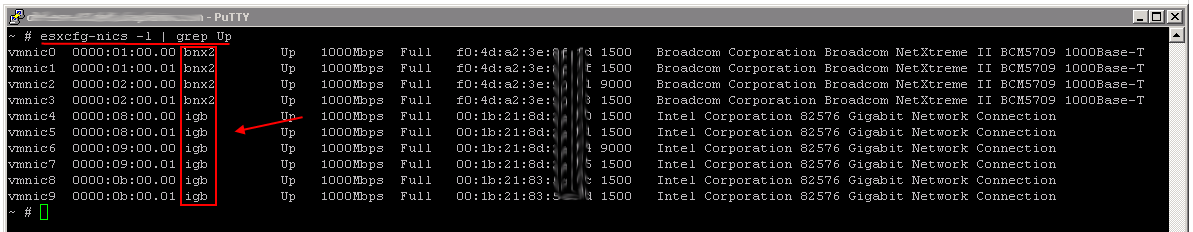

Network 1: Onboard Dual Intel 82574L Gigabit Ethernet Controllers

Network 2: HP NC360T PCI Express Dual Port Gigabit Server Adapter [which is effectively an Intel PRO/1000 PT Dual Port NIC]

Network 3: Intel Ethernet Converged Network Adapter X520-DA2, 10GbE, Dual Port

RAID Controller: IBM ServeRAID M1015 [which is more or less an OEM version of the LSI 9220-8i]

ODD: Toshiba/Samsung TS-H653 20x DVD±RW DL SATA Drive

I will be updating this post as work on the server progresses! Stay tuned.

Project Update #1

Project Update #2

Project Update #3

Project Update #4

Project Update #5

Project Update #6

Project Update #7

FINAL UPDATE
