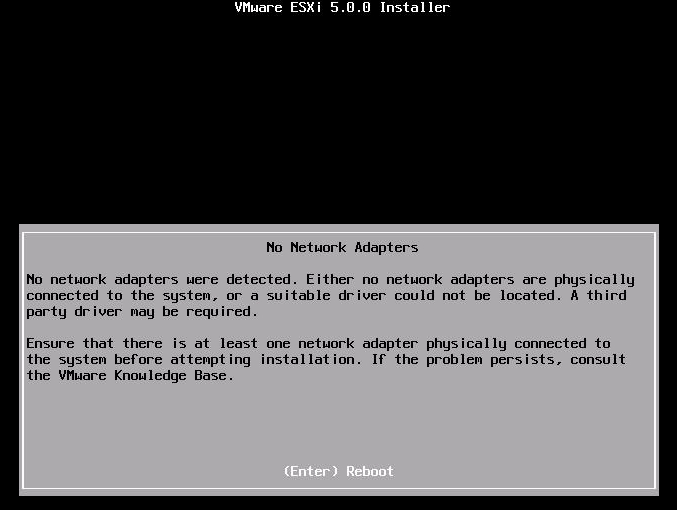

So here we are: a lovely Thursday morning at work, and a requirement for a new VM comes up. I'm thinking it's not a big deal, since I have deployed thousands of VMs before, but there is a catch this time (there always is!). All of my Windows Server templates are virtual machine HW version 8, and I need to deploy one server to an ESXi 4.1 host. Great! ESXi 4.1 supports HW version 7 at most, so HW version 8 will not work. If you attempt to add a HW version 8 VM to the inventory on an ESXi 4.1 host, you will be met by the following outcome:

The VM is added without any errors, but it is grayed out and shows an invalid status. There is not much you can do here apart from removing it from the inventory.
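If you want to spot this mismatch before registering a VM, the hardware version is recorded in the VM's .vmx file as the `virtualHW.version` key. Below is a minimal Python sketch of such a check. The helper names (`read_hw_version`, `fits_host`) and the sample .vmx contents are made up for illustration, and the limit of 7 is simply the ESXi 4.1 maximum mentioned above.

```python
import re

# Highest virtual hardware version an ESXi 4.1 host accepts (per the text above).
ESXI_41_MAX_HW_VERSION = 7


def read_hw_version(vmx_text):
    """Return the virtualHW.version value found in .vmx contents, or None."""
    match = re.search(
        r'^\s*virtualHW\.version\s*=\s*"(\d+)"',
        vmx_text,
        re.MULTILINE | re.IGNORECASE,
    )
    return int(match.group(1)) if match else None


def fits_host(vmx_text, host_max=ESXI_41_MAX_HW_VERSION):
    """True only if a hardware version is present and the host supports it."""
    version = read_hw_version(vmx_text)
    return version is not None and version <= host_max


# Hypothetical .vmx excerpt standing in for one of the HW version 8 templates.
sample_vmx = (
    'config.version = "8"\n'
    'virtualHW.version = "8"\n'
    'displayName = "example-template"\n'
)

print(read_hw_version(sample_vmx), fits_host(sample_vmx))
```

For a real VM you would read the .vmx file from the datastore and pass its contents to `fits_host` instead of the sample string.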