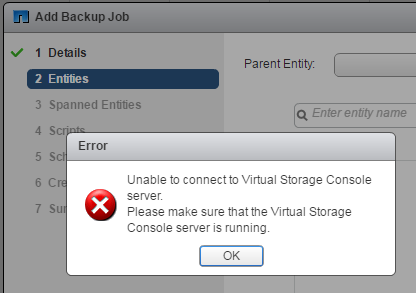

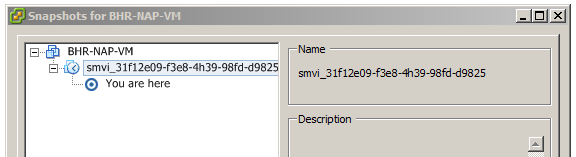

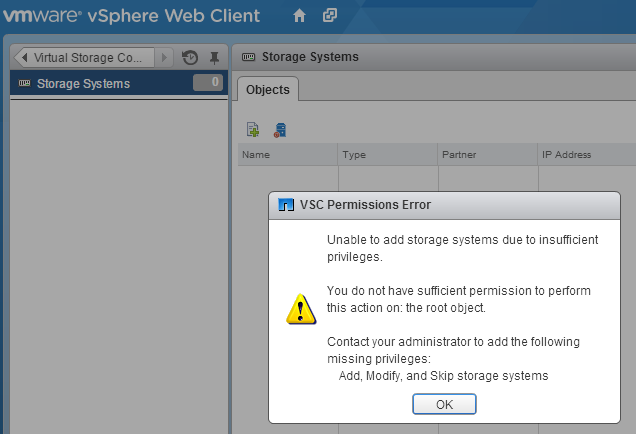

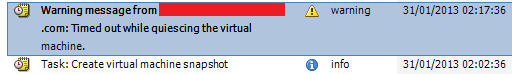

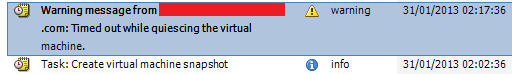

Today was meant to be just another ordinary day in the office, and for the most part it was. Around 1 PM I noticed that the 2 AM NetApp VSC backup job was still running… Bit odd, I thought, as this had never happened before; it’s normally done in 30 minutes tops. The vSphere client was showing one VM in Recent Tasks as still in progress. Hmm, so what’s up with that VM then? It looked completely stuck: I couldn’t edit settings, power off, reset, etc. Nothing worked. The Tasks & Events tab explained the situation a bit better:

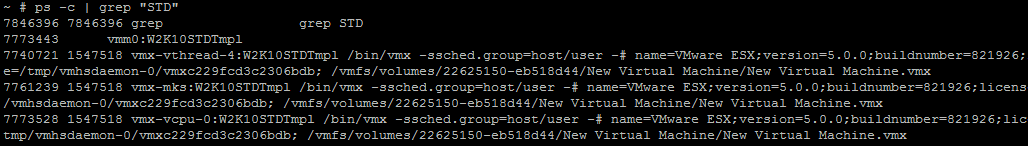

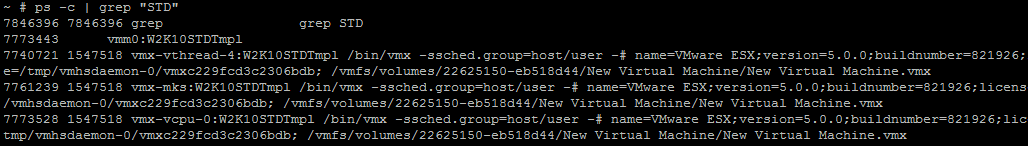

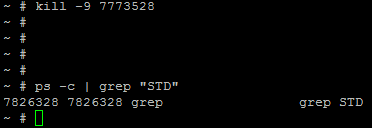

So basically the backup started and got stuck while taking snapshots, because it was unable to quiesce the file system. Beyond this point the vSphere client is pretty much useless, so it was time to hit the command line via SSH to get me out of trouble. First you need to know the name of your stuck VM. It doesn’t have to be letter for letter, as you can simply search for it using grep in the list of active processes on the ESXi host. My VM had ‘STD’ in its name (aka the Standard flavor of Windows Server 2012), and to find the actual PID I had to run the following command:

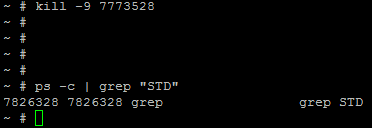
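In text form, the search step might look something like the sketch below. `match_processes` is a hypothetical helper name (it is not an ESXi command); it just wraps the `ps | grep` idea so that the grep process itself doesn't show up in the results. It assumes the VM name appears somewhere in each `ps` output line, which is what the search described above relies on.

```shell
# Hypothetical helper, not part of ESXi.
# match_processes FRAGMENT: read a process listing on stdin and print the
# lines that contain FRAGMENT (case-insensitive), dropping any line that
# mentions grep so the search does not report itself.
match_processes() {
    grep -i -- "$1" | grep -v 'grep'
}

# On the ESXi host this would be used roughly as:
#   ps | match_processes STD
```

The PID to kill is then read off the matching line. The column layout of `ps` differs between ESXi versions, so it's worth eyeballing the output rather than cutting out a column blindly.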

To kill the process that runs your VM, it’s simply the kill command followed by the PID number (in my case, the one found in the previous step).

Now it should be gone. A quick check for the PID we just killed shows there is no such PID anymore. Good.
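The kill-and-check steps can be sketched as one small function. This is only an illustration: `kill_and_check` is a made-up name, and it checks for the PID with `kill -0` (which sends no signal and only tests whether the process exists) rather than by grepping the process list again.

```shell
# Hypothetical helper, not from the original steps.
# kill_and_check PID: send the default signal (SIGTERM), then poll for up to
# about five seconds until the PID disappears.
# Returns 0 once the PID is gone, 1 if the signal could not be sent or the
# process is still there after the wait.
kill_and_check() {
    pid=$1
    kill "$pid" 2>/dev/null || return 1    # no such PID, or not permitted
    tries=0
    # "kill -0" delivers nothing; it only reports whether the PID exists.
    while kill -0 "$pid" 2>/dev/null; do
        [ "$tries" -ge 5 ] && return 1     # still alive, give up
        sleep 1
        tries=$((tries + 1))
    done
    return 0
}

# Usage, with the PID found in the search step:
#   kill_and_check <PID> && echo "gone"
```

If the process ignores the default signal, `kill -9` is the usual last resort, at the cost of giving the process no chance to clean up.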

At this point my VSC job simply timed out and moved on to back up the other VMs in the datastore.

Happy days.
